Case studies

These examples are anonymized where needed, but each one focuses on concrete implementation details: what launched first, what the team measured, and which commercial workflows improved before anyone discussed headline metrics.

What gets measured first

Teams usually validate visualizer starts, session depth, and post-interaction buying behavior before they claim broader conversion impact.

What matters operationally

Support load, quoting speed, sample request quality, and the volume of vague fit questions are often the first areas to improve.

What shapes the result

Catalog quality, launch discipline, and rollout prioritization affect outcomes more than generic design polish.

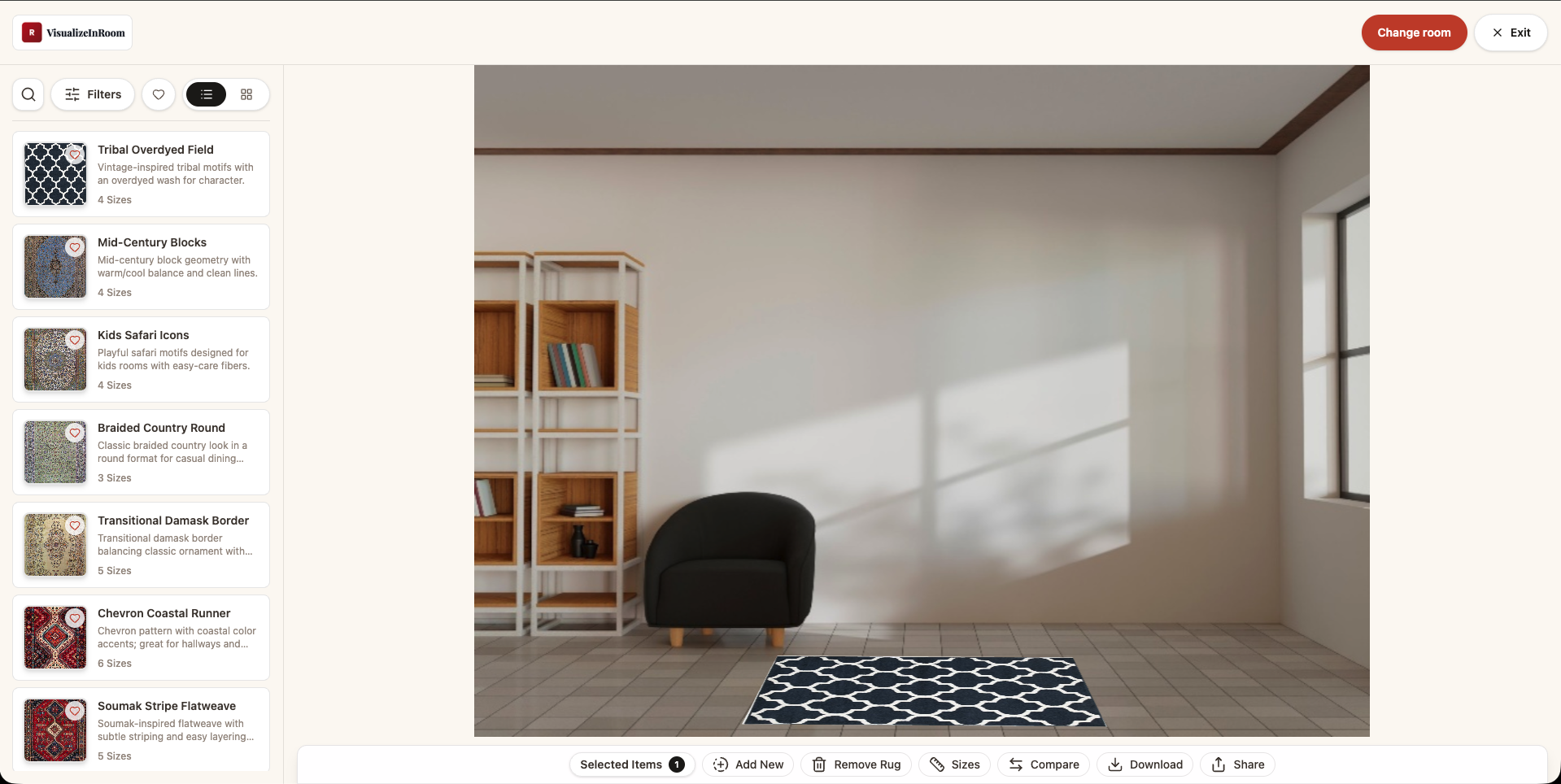

Anonymized ecommerce rollout

The team did not start with every SKU. They launched first on a product subset with clean dimensions, publishable imagery, and clear merchandising ownership.

What changed first

The first signal was not a headline metric. It was cleaner product conversations: fewer vague size questions, stronger comparison behavior, and more confidence around which collections deserved deeper rollout investment.

Anonymized assisted-selling rollout

A multi-category retail environment needed a faster way to compare products during consultations and follow-up conversations.

What changed first

The quality of the sales conversation improved first: shorter explanation loops, more intentional sample requests, and clearer progression from comparison to quoting.

Book demo

We will use your current catalog and selling workflow to discuss which proof points to measure first, which rollout path makes sense, and how to avoid a demo-first launch with no operational follow-through.